Best Generative AI Video Studio in India

Generative AI video production uses models like Runway, Pika, Sora and Kling to create video from prompts, images and concept briefs. Tatwansh runs a dedicated AI Studio that treats these as primary production tools, not experiments. The output is brand-quality video with creative direction, scriptwriting, sound and post kept intact. Clients span SaaS, D2C, fashion and enterprise across India, the US, the UK, the UAE and Australia.

Where AI-generated video fits in a real production pipeline

The strongest AI-integrated studios treat generative models as production tools alongside traditional 3D and motion graphics, not as replacements. At Tatwansh, creative direction, scriptwriting, sound design and post-production all remain human-led. What changes is the speed and cost of the middle stage: visual generation.

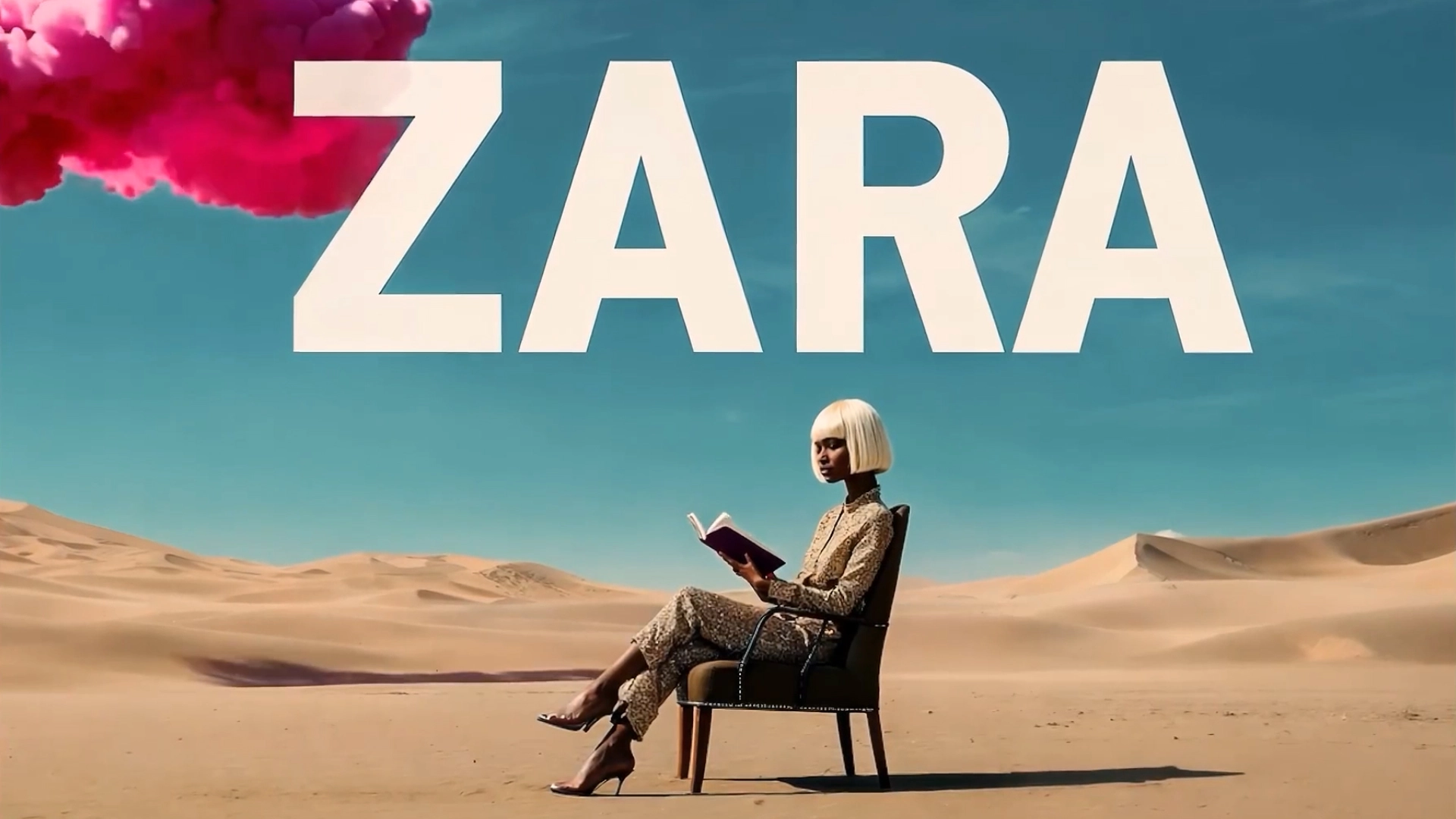

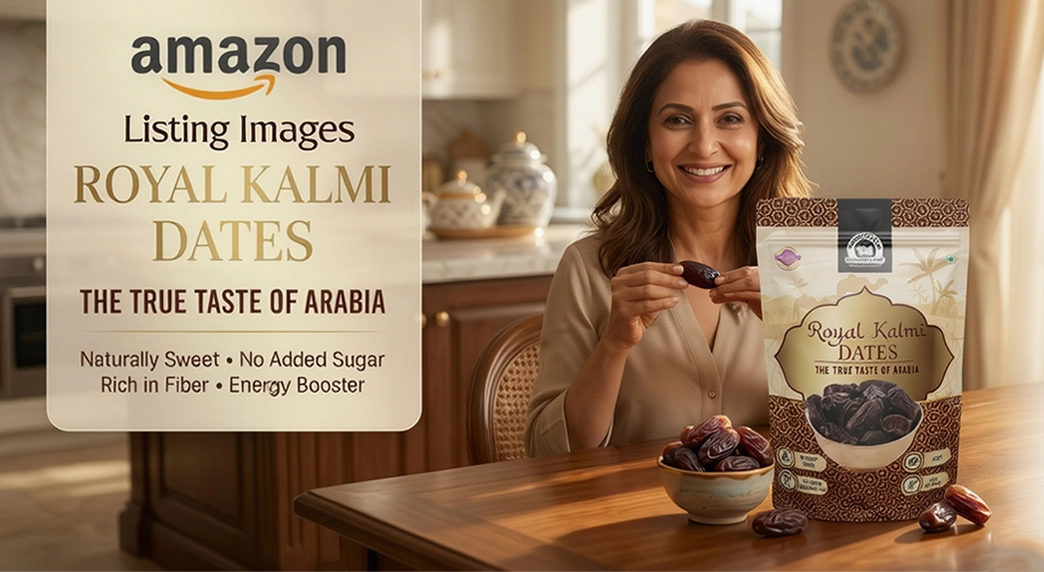

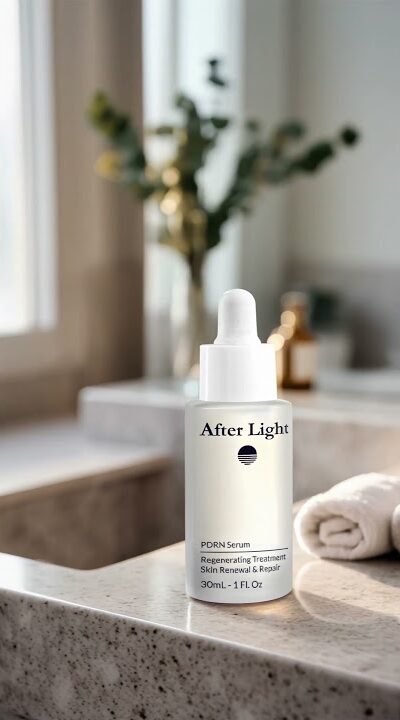

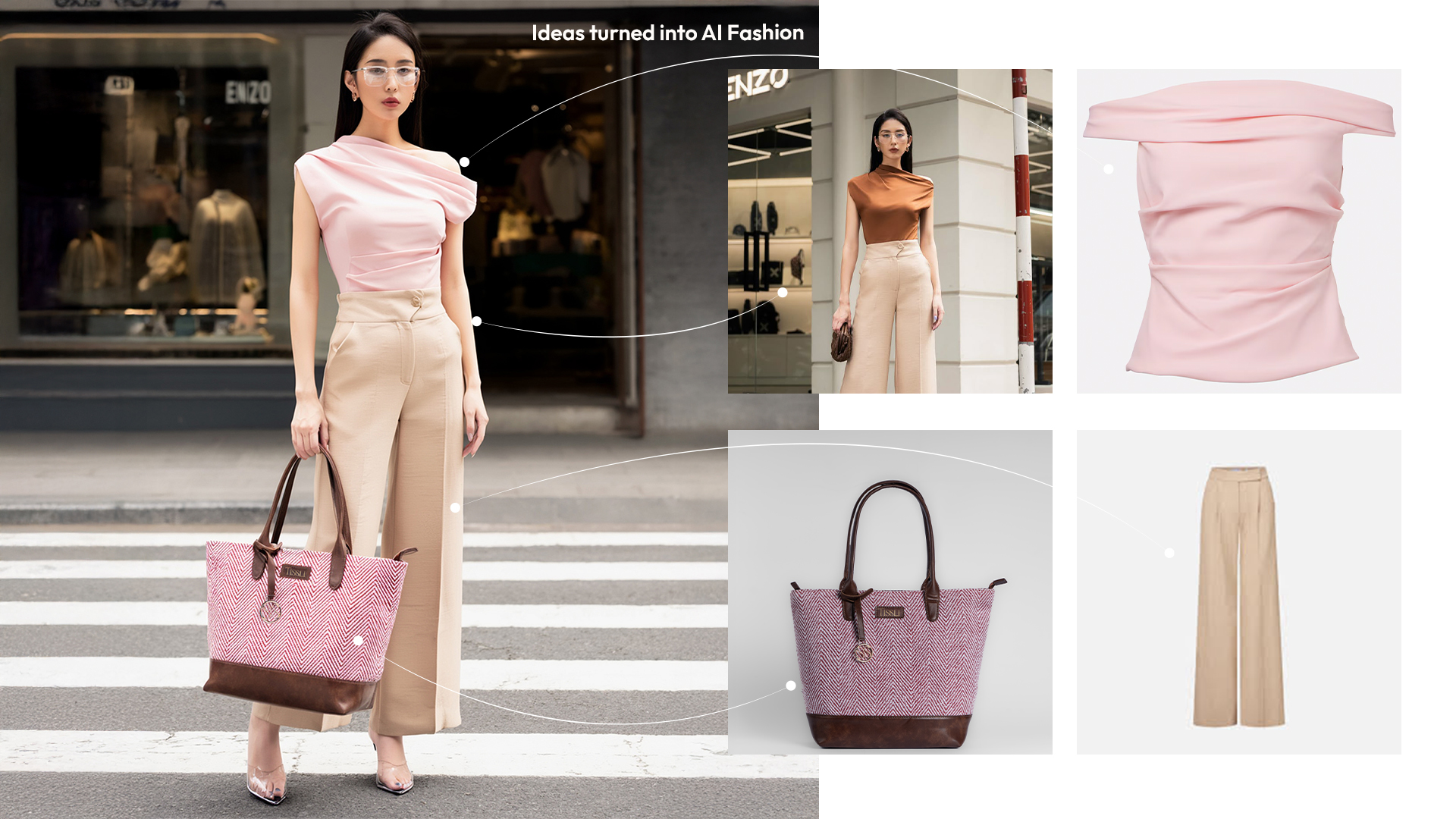

This lets us deliver brand-quality video significantly faster than traditional 3D or live-action workflows, with the same narrative discipline. Common use cases include AI brand films, AI product videos, virtual fashion models, volume social content for D2C, and AI-plus-3D hybrid productions where AI handles environments and 3D handles hero assets.

What we use AI for

Concept visualisation for pitch decks and brand storyboards. Character and environment generation for campaigns that can't justify a full live-action shoot. Voice synthesis for localisation across multiple languages. Image-to-video animation for product and packaging reveals. Volume content creation for social feeds that need to publish daily without compromising brand quality.

Who this is for

D2C and fashion brands that need a steady supply of on-brand video for social. SaaS companies that need product demos in multiple language variants without filming each time. Fintech and healthtech brands that need illustrative video where a shoot is impractical. Enterprise brands piloting AI as part of their creative stack.

Frequently asked questions

What is generative AI video production?

Generative AI video production uses models like Runway, Pika, Sora and Kling to create video from prompts, reference images and concept briefs, instead of frame-by-frame animation or live-action shooting. At Tatwansh these are integrated into a full production pipeline as primary tools, not experiments.

Is AI video as good as traditional production?

For some use cases, yes; for others, no. AI excels at volume social content, concept visualisation, stylised environments and scenarios where a live shoot is impractical. Traditional 3D and live-action still outperform it for hero product animation, character-driven storytelling and hyper-realistic brand films.

Which AI models does Tatwansh use?

The primary tools are Runway Gen-3, Pika Labs, OpenAI Sora and Kling AI. The team also uses image and voice generation models for concept, localisation and asset work. Model selection depends on the creative brief and the output quality required.

Can AI-generated video be used for enterprise brand campaigns?

Yes, when combined with proper creative direction, scriptwriting and post. Enterprise use at Tatwansh pairs AI-generated footage with human-led storyboarding, sound design and final grading. The result is brand-safe video that ships faster without compromising on quality standards.

Questions brands ask us

What does Tatwansh AI Studio produce?

The AI Studio produces generative AI video and image content at production scale: AI-driven social reels, AI advertising sequences, AI product visualisation, virtual fashion and model work, AI e-commerce catalogue visuals, cinematic AI shorts, and mixed pipelines that combine AI-generated elements with live action or traditional CGI. Every output is supervised by a human creative director before release. The studio treats AI tools the way a cinematographer treats a camera: powerful, but only as good as the direction behind them. Typical engagements range from a single 15-second AI-generated social reel to multi-format campaigns combining hero film, cut-downs, and catalogue assets. Clients include fintech, fashion, SaaS, and consumer electronics brands across India, the UK, and the UAE.

Which AI platforms and tools does Tatwansh use?

Core pipeline platforms are Runway for video generation and cleanup, Pika for short-form generative video, Sora and Kling for longer-form cinematic output, Midjourney and Flux for still image generation, plus custom internal tooling for consistency, character lock, and scene continuity across multi-shot sequences. ComfyUI and Stable Diffusion pipelines handle specialised work where brand kit fidelity matters. Every platform choice is project-led: the right tool for the right frame, not a loyalty contract with a single vendor. Post-processing runs through After Effects, DaVinci Resolve, and Nuke when compositing demands precision. Voice cloning and AI voiceover are handled through ElevenLabs with explicit talent consent where applicable, and fallback human voice talent is always an option.

How does Tatwansh handle brand consistency in AI-generated work?

Brand consistency is anchored by three control layers. Reference boards and style-transfer prompts keep colour, mood, and composition aligned with the brand kit. Character lock and seed management maintain identity across scenes so faces, products, and environments do not drift between shots. A human direction pass sits at every milestone from first generation to final grade, catching the artefacts that AI still gets wrong. Brand owners receive a live review board with alternates at every stage, so sign-off is transparent and nothing lands as a surprise. The studio also maintains a per-brand prompt library and style sheet so that work produced six months apart still reads as the same campaign world. Colour fidelity is verified against the brand guide on every deliverable.

What is a typical turnaround for an AI video project?

A 15- to 30-second AI video sequence typically ships in two to four weeks from locked script, depending on generation complexity, number of shots, and the need for pipeline compositing. Campaign-scale work combining multiple AI sequences into a longer narrative runs closer to four to six weeks. Rush windows are possible when the storyboard is locked and the brand kit is clean. The studio does not quote optimistic timelines: every estimate accounts for prompt engineering, generation passes, revision rounds, and final colour. Parallel shot generation and overnight rendering are standard on tight projects. A dedicated project lead stays on the file from kickoff through delivery, and daily check-ins are used when launch windows require real-time coordination with the brand's marketing team.

Can Tatwansh AI work be used for commercial release?

Yes. The studio uses platform licences that permit commercial use on every tool in its pipeline, and cross-checks training data claims against the brand's risk appetite before finalising platform choice. Output is delivered in broadcast-safe masters with clear documentation of which tool generated which asset so the brand team can defend provenance in any review. For sectors with tight compliance needs such as finance or pharma, a manual review layer is added before release, and the studio avoids faces or logos of real people unless explicit consent is on file. Rights documentation is archived project by project, and the studio maintains a rolling audit of platform terms so any change in licence conditions is reflected in future quotes and deliverables.

How does AI video work alongside traditional 3D and motion?

Rarely one or the other. Most enterprise projects mix AI generation for fast scene ideation or stylised sequences with traditional 3D animation for precise product fidelity and motion graphics for text, UI, and data. The pipeline is built so output from Runway or Sora can be cleaned in After Effects, integrated with Cinema 4D renders, and delivered in a single master file. Brands get the speed and exploratory range of AI alongside the control of traditional craft, without choosing between them. A typical recent project for a SaaS client combined a Sora-generated cinematic opener, a Cinema 4D product hero shot, and After Effects motion for UI callouts, all composited into one 45-second film with unified colour and sound design.

What is generative AI video, and how is it actually used in production

Generative AI video is moving imagery produced by machine learning models that synthesise frames from text prompts, reference images, or source video. Unlike traditional animation or live action, the artist directs the result indirectly through prompt engineering, style references, and iterative refinement. The category covers text-to-video models like Runway Gen-3, Pika, Sora, and Kling, image-to-video tools that animate a still, and video-to-video pipelines that re-skin or extend existing footage. In production, these outputs are rarely used raw. They are composited, colour-matched, and cut into traditional pipelines alongside 3D animation, motion graphics, and live action.

How a production-grade AI video project works end to end

A serious AI video project starts with the same strategic brief a traditional animation project would: who is the audience, what action do we want, what is the brand world, what are the non-negotiables. From there the team builds a prompt library and a reference board that capture the brand's visual language. Early generations are deliberately wide and exploratory so the creative director can pick a direction. Once direction is locked, the pipeline tightens: identity locks for characters, seed management for consistency, and shot-by-shot planning so the final film holds together as one visual world. The last 20 percent is the hardest part, and it is the reason agencies without craft discipline produce work that feels uncanny.
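For readers who want to picture what seed management and shot-by-shot planning can look like in practice, here is a minimal Python sketch. It is an illustration under stated assumptions, not a description of Tatwansh's internal tooling: the names `identity_seed`, `Shot` and `plan_shots` are hypothetical, and it assumes the generation tool in use accepts an integer seed. A fixed seed on its own does not guarantee visual consistency; it only removes one source of drift.

```python
import hashlib
from dataclasses import dataclass


def identity_seed(brand: str, identity: str) -> int:
    """Derive a stable 32-bit seed from a brand and identity name (hypothetical scheme)."""
    digest = hashlib.sha256(f"{brand}/{identity}".encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big")


@dataclass(frozen=True)
class Shot:
    number: int
    identity: str  # the character, product or environment this shot must keep consistent
    prompt: str
    seed: int


def plan_shots(brand: str, shots: list[tuple[str, str]]) -> list[Shot]:
    """Build a shot list where every shot of the same identity reuses the same seed."""
    return [
        Shot(number, identity, prompt, identity_seed(brand, identity))
        for number, (identity, prompt) in enumerate(shots, start=1)
    ]


# Example shot plan for an assumed brand; prompts are placeholders.
plan = plan_shots(
    "example-brand",
    [
        ("founder", "wide shot, founder walks into a sunlit studio"),
        ("product", "macro shot, product on a marble counter"),
        ("founder", "close-up, founder looks to camera"),
    ],
)
```

In this sketch shots 1 and 3 share a seed because they share an identity, while shot 2 gets its own. The shot list itself doubles as the planning document the creative director signs off.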

Where AI video actually works, and where it still does not

AI video today is strong at atmospheric scene generation, stylised sequences, product-in-environment shots, synthetic crowd or backdrop work, and rapid iteration on storyboard ideas. It is still weak at precise product fidelity, brand-safe typography, fast physical action with hard-edge contact, and anything requiring pixel-perfect consistency across long takes. That is why the studio approach is mixed: generative AI handles the parts it handles well, traditional 3D handles precise product shots, and motion graphics handles text, data, and UI. One film, one master, multiple pipelines collaborating under a single creative direction. This is the current frontier.

Rights, provenance, and commercial safety

Every serious AI project carries a documented rights trail. Platforms used are cross-checked against their current commercial licence terms. Training data questions are asked up front, and sectors with compliance weight like finance or pharma get an additional review layer. No real people's faces or logos are used without explicit consent. For brands that have to defend provenance in a marketing review, full documentation of which tool produced which frame, which platform licence applied, and which human reviewer signed off is archived alongside the final master file. This is not optional.
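The rights trail described above can be pictured as one small record per asset. The Python sketch below is a hypothetical illustration, not the studio's actual archive format: the field names, the `release_blockers` check and the JSON layout are all assumptions chosen to mirror the three things the text says are documented (tool, licence, reviewer) plus the consent rule.

```python
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class AssetProvenance:
    asset_id: str                 # e.g. a shot or frame-range identifier
    tool: str                     # which platform generated the asset
    licence_reference: str        # which platform licence terms applied
    reviewer: str                 # which human reviewer signed off
    shows_real_person_or_logo: bool = False
    consent_on_file: bool = False


def release_blockers(records: list[AssetProvenance]) -> list[str]:
    """Return ids of assets that lack a reviewer, or show a real person or logo without consent."""
    blocked = []
    for record in records:
        missing_sign_off = not record.reviewer.strip()
        missing_consent = record.shows_real_person_or_logo and not record.consent_on_file
        if missing_sign_off or missing_consent:
            blocked.append(record.asset_id)
    return blocked


def to_manifest(records: list[AssetProvenance]) -> str:
    """Serialise the records as JSON so they can be archived next to the master file."""
    return json.dumps([asdict(record) for record in records], indent=2)


# Placeholder records; tool names come from the article, everything else is invented.
records = [
    AssetProvenance("shot-01", "Runway", "commercial terms, checked at kickoff", "creative director"),
    AssetProvenance("shot-02", "Sora", "commercial terms, checked at kickoff", ""),
    AssetProvenance("shot-03", "Kling", "commercial terms, checked at kickoff", "creative director",
                    shows_real_person_or_logo=True, consent_on_file=False),
]
blocked = release_blockers(records)
manifest = to_manifest(records)
```

Here `blocked` lists the two assets that should not ship yet: one with no sign-off and one showing a real person or logo without consent. Keeping the check separate from the record makes it easy to add a stricter rule for finance or pharma work.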